Yesterday, Apple announced iOS 7.0.6, a critical security update for iOS 7, and an update for OS X / Safari is likely to follow soon (if you haven’t updated iOS yet, do it now).

The problem turns out to be caused by a seemingly simple programming error, now widely discussed as #gotofail on Twitter.

What can we, as software engineers and educators of software engineers, learn from this high impact bug?

The Code

A careful analysis of the underlying problem is provided by Adam Langley. The root cause is in the following code:

```c
static OSStatus
SSLVerifySignedServerKeyExchange(SSLContext *ctx, bool isRsa, SSLBuffer signedParams,
                                 uint8_t *signature, UInt16 signatureLen)
{
    OSStatus err;
    ...

    if ((err = SSLHashSHA1.update(&hashCtx, &serverRandom)) != 0)
        goto fail;
    if ((err = SSLHashSHA1.update(&hashCtx, &signedParams)) != 0)
        goto fail;
        goto fail;
    if ((err = SSLHashSHA1.final(&hashCtx, &hashOut)) != 0)
        goto fail;
    ...

fail:
    SSLFreeBuffer(&signedHashes);
    SSLFreeBuffer(&hashCtx);
    return err;
}
```

To any software engineer, the two consecutive goto fail lines will be suspicious. They are, and more than that. To quote Adam Langley:

The code will always jump to the end from that second goto, err will contain a successful value because the SHA1 update operation was successful and so the signature verification will never fail.
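To see why `err` ends up signalling success, here is a self-contained sketch that mimics the control flow above. The `Status` type and the functions `hash_update`, `hash_final`, and `verify_signature` are hypothetical stubs, not Apple’s API: hashing always succeeds and the stub verifier always rejects, so a correct implementation should return an error.

```c
#include <stdbool.h>

typedef int Status;                    /* simplified stand-in for OSStatus; 0 = success */

static bool signature_checked;         /* records whether the verifier actually ran */

/* Hypothetical stubs: hashing succeeds, but every signature is rejected. */
static Status hash_update(void)      { return 0; }
static Status hash_final(void)       { return 0; }
static Status verify_signature(void) { signature_checked = true; return -1; }

/* Same control flow as the flawed code, including the duplicated goto. */
Status verify_with_goto_fail(void)
{
    Status err;
    signature_checked = false;

    if ((err = hash_update()) != 0)
        goto fail;
    if ((err = hash_update()) != 0)
        goto fail;
        goto fail;                     /* unconditional: skips everything up to fail: */
    if ((err = hash_final()) != 0)
        goto fail;
    if ((err = verify_signature()) != 0)
        goto fail;

fail:
    return err;                        /* still 0 from the last successful update */
}

/* The same function with the duplicated line removed. */
Status verify_fixed(void)
{
    Status err;
    signature_checked = false;

    if ((err = hash_update()) != 0)
        goto fail;
    if ((err = hash_update()) != 0)
        goto fail;
    if ((err = hash_final()) != 0)
        goto fail;
    if ((err = verify_signature()) != 0)
        goto fail;

fail:
    return err;                        /* -1: the rejection is propagated */
}
```

Calling `verify_with_goto_fail()` returns 0 without ever running the verifier, while `verify_fixed()` correctly reports the rejected signature.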

Not verifying a signature is exploitable. In the coming months, plenty of devices will still be running older versions of iOS and OS X. These will remain vulnerable, and exploitable.

Brittle Software Engineering

When I first saw this code, I was once again struck by how incredibly brittle programming is. Adding just a single line of code can bring a system to its knees.

For seasoned software engineers this will not come as a surprise. But students and aspiring software engineers will have a hard time believing it. Therefore, sharing problems like this is essential to make students sufficiently aware that code quality matters.

Code Formatting is a Security Feature

When reviewing code, I try to be picky about details, including white space, tabs, and new lines. Not everyone likes me for that. I have often wondered whether I was just being annoyingly pedantic, or whether it was the right thing to do.

The case at hand shows that white space is a security concern. Correct indentation immediately reveals that something fishy is going on, as the final check has now become unreachable:

```c
if ((err = SSLHashSHA1.update(&hashCtx, &signedParams)) != 0)
    goto fail;
goto fail;
if ((err = SSLHashSHA1.final(&hashCtx, &hashOut)) != 0)
    goto fail;
```

Insisting on curly braces would highlight the fault even more:

```c
if ((err = SSLHashSHA1.update(&hashCtx, &signedParams)) != 0) {
    goto fail;
}
goto fail;
if ((err = SSLHashSHA1.final(&hashCtx, &hashOut)) != 0) {
    goto fail;
}
```

Indentation Must be Automatic

Because code formatting is a security feature, we must not do it by hand, but use tools to get the indentation right automatically.

A quick inspection of the sslKeyExchange.c source code reveals that it is not routinely formatted automatically: There are plenty of inconsistent spaces and tabs, as well as code in comments. With modern tools, such as Eclipse’s format-on-save, code like this could not even be saved in this form.

Yet just forcing developers to use formatting tools may not be enough. We must also invest in improving the quality of such tools. In some cases, hand-made layout can make a substantial difference to the understandability of code. Perhaps current tools do not sufficiently acknowledge such needs, leading to under-use of today’s formatting tools.

Code Review to the Rescue?

Besides automated code formatting, critical reviews might also help. In the words of Adam Langley:

Code review can be effective against these sorts of bug. Not just auditing, but review of each change as it goes in. I’ve no idea what the code review culture is like at Apple but I strongly believe that my colleagues, Wan-Teh or Ryan Sleevi, would have caught it had I slipped up like this. Although not everyone can be blessed with folks like them.

While I fully subscribe to the importance of reviews, a word of caution is in order. My colleague Alberto Bacchelli has investigated how code review is applied today at Microsoft. His findings (published as a paper at ICSE 2013, and nicely summarized by Alex Nederlof as The Truth About Code Reviews) include:

There is a mismatch between the expectations and the actual outcomes of code reviews. From our study, review does not result in identifying defects as often as project members would like and even more rarely detects deep, subtle, or “macro” level issues. Relying on code review in this way for quality assurance may be fraught.

Automated Checkers to the Rescue?

If manual reviews won’t find the problem, perhaps tools can find it? Indeed, the present mistake is a simple example of a problem caused by unreachable code. Any computer science student will be able to write a (basic) “unreachable code detector” that would warn about the unguarded goto fail followed by more (unreachable) code (assuming parsing C is a ‘solved problem’).
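A minimal sketch of such a basic detector, assuming parsing has already been done: the function body is given as a flat sequence of simplified statements. The `StmtKind` classification and `find_unreachable` are hypothetical names for illustration. The sketch only handles straight-line code with labels, which is enough for the pattern above, and it conservatively treats every label as a possible jump target (so an unused label would hide unreachable code: a small example of the precision tradeoff discussed below).

```c
#include <stddef.h>

/* Hypothetical, pre-parsed view of a function body. */
typedef enum {
    STMT_PLAIN,         /* ordinary statement */
    STMT_GUARDED_GOTO,  /* "if (cond) goto label;": may fall through */
    STMT_GOTO,          /* unguarded "goto label;": never falls through */
    STMT_RETURN,        /* "return ...;": never falls through */
    STMT_LABEL          /* "label:": possible jump target */
} StmtKind;

/* Stores the indices of unreachable statements in out (capacity max_out)
   and returns how many were stored. */
size_t find_unreachable(const StmtKind *body, size_t n,
                        size_t *out, size_t max_out)
{
    size_t found = 0;
    int reachable = 1;                 /* the first statement is always reachable */

    for (size_t i = 0; i < n; i++) {
        if (body[i] == STMT_LABEL) {
            reachable = 1;             /* conservatively assume some goto targets it */
            continue;
        }
        if (!reachable && found < max_out)
            out[found++] = i;
        if (body[i] == STMT_GOTO || body[i] == STMT_RETURN)
            reachable = 0;             /* control cannot fall through to the next statement */
    }
    return found;
}
```

Given the statement sequence of the excerpt above (two guarded gotos, the stray `goto fail`, the `final` check, further code, then the `fail:` label), it flags everything between the stray jump and the label.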

Therefore, it is no surprise that plenty of commercial and open source tools exist to check for such problems automatically: Even using the compiler with the right options (presumably -Weverything for Clang) would warn about this problem.

Here, again, the key question is why such tools are not applied. The big problem with tools like these is their lack of precision, leading to too many false alarms. Forcing developers to wade through long lists of irrelevant warnings will do little to prevent bugs like these.

Unfortunately, this lack of precision is a direct consequence of the fact that unreachable code detection (like many other program properties of interest) is a fundamentally undecidable problem. As such, an analysis always needs to make a tradeoff between completeness (covering all suspicious cases) and precision (covering only cases that are certainly incorrect).
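For an illustration of why such tools cannot be exact, consider the hypothetical function below. For an unbounded search (ignoring the finite range of `unsigned long`), deciding whether the `return n;` line is reachable amounts to settling Goldbach’s conjecture (every even number greater than 2 is the sum of two primes), which is still open. A checker therefore has to approximate: it either warns (a possible false alarm) or stays silent (a possible miss).

```c
#include <stdbool.h>

/* Trial-division primality test; fine for small illustrative inputs. */
static bool is_prime(unsigned long k)
{
    if (k < 2)
        return false;
    for (unsigned long d = 2; d * d <= k; d++)
        if (k % d == 0)
            return false;
    return true;
}

/* Returns the first even n in [4, limit] that is NOT a sum of two primes,
   or 0 if there is none. */
unsigned long goldbach_counterexample(unsigned long limit)
{
    for (unsigned long n = 4; n <= limit; n += 2) {
        bool found = false;
        for (unsigned long p = 2; p <= n / 2 && !found; p++)
            found = is_prime(p) && is_prime(n - p);
        if (!found)
            return n;   /* reachable only if Goldbach's conjecture fails */
    }
    return 0;
}
```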

To understand the sweet spot in this tradeoff, more research is needed, both on the types of errors that are likely to occur and on techniques to discover them automatically.

Testing is a Security Concern

As an advocate of unit testing, I wonder how the code could have passed a unit test suite.

Unfortunately, the testability of the problematic source code is very poor. In the current code, functions are long, and they cover many cases in different conditional branches. This makes it hard to invoke specific behavior and to bring the functions into a state in which that behavior can be tested. Furthermore, observability is low, especially since parts of the code deliberately obfuscate results to protect against certain types of attacks.
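As a sketch of what better testability might look like (this is not how Apple’s code is structured), suppose the hashing and verification steps are passed in as function pointers. A unit test can then inject a verifier that always rejects and check that the rejection is propagated. The `KeyExchangeOps` struct and all function names below are hypothetical.

```c
typedef int Status;                    /* 0 means success */

/* Hypothetical injectable operations; production code would pass the real ones. */
typedef struct {
    Status (*hash_update)(void);
    Status (*hash_final)(void);
    Status (*verify)(void);
} KeyExchangeOps;

/* Runs the steps in order and propagates the first error. */
Status verify_key_exchange(const KeyExchangeOps *ops)
{
    Status err;

    if ((err = ops->hash_update()) != 0) {
        goto fail;
    }
    if ((err = ops->hash_final()) != 0) {
        goto fail;
    }
    if ((err = ops->verify()) != 0) {
        goto fail;
    }

fail:
    return err;
}

/* Test doubles: one always succeeds, one always rejects. */
static Status succeed(void) { return 0; }
static Status reject(void)  { return -1; }

/* Unit test: a rejected signature must never be reported as success.
   Returns 1 if the test passes, 0 if it fails. */
int test_rejected_signature_is_reported(void)
{
    KeyExchangeOps ops = { succeed, succeed, reject };
    return verify_key_exchange(&ops) != 0;
}
```

Had a stray `goto fail;` slipped in after the first check, `verify` would never be called and this test would fail.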

Thus, given the current code structure, unit testing will be difficult. Nevertheless, the full outcome can be tested, albeit at the system (not unit) level. Quoting Adam Langley again:

I coded up a very quick test site at https://www.imperialviolet.org:1266. […] If you can load an HTTPS site on port 1266 then you have this bug.

In other words, while the code may be hard to unit test, the system luckily has well defined behavior that can be tested.

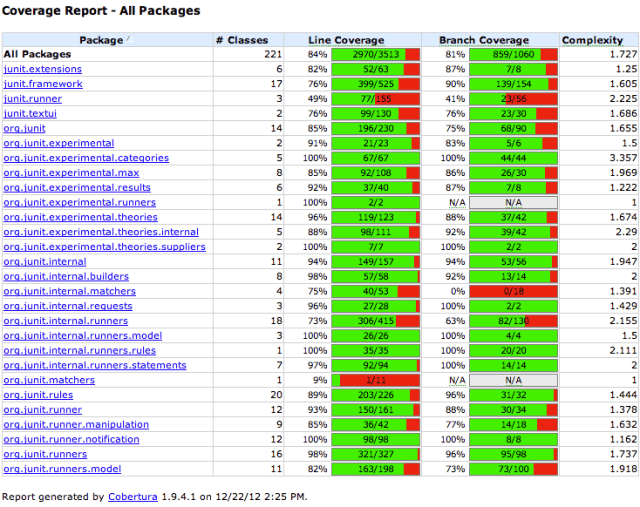

Coverage Analysis

Once there is a set of (system or unit level) tests, the coverage of these tests can be used as an indicator of (the lack of) completeness of the test suite.

For the bug at hand, even the simplest form of coverage analysis, namely line coverage, would have helped to spot the problem: Since the bug makes the subsequent code unreachable, there is no way to achieve 100% line coverage.

Therefore, any serious attempt to achieve full statement coverage should have revealed this bug.
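To make this concrete, here is a hand-rolled statement-coverage sketch; real projects would use a tool such as gcov instead. The `COVER` macro, the `hits` array, and the `instrumented` function are hypothetical instrumentation of the flawed control flow, with the function parameters standing in for the outcomes of the hashing steps. Whatever inputs a test suite supplies, the counters after the stray `goto` stay at zero.

```c
#include <stddef.h>

typedef int Status;                    /* 0 means success */

#define N_STMTS 4
static unsigned hits[N_STMTS];         /* hypothetical per-statement counters */
#define COVER(id) (hits[(id)]++)

/* Instrumented copy of the flawed control flow. */
Status instrumented(Status update_result, Status final_result)
{
    Status err;

    COVER(0);
    if ((err = update_result) != 0)
        goto fail;
    COVER(1);
    goto fail;                         /* the stray, unconditional jump */
    COVER(2);
    if ((err = final_result) != 0)
        goto fail;
    COVER(3);

fail:
    return err;
}

/* Number of instrumented statements executed at least once. */
size_t covered_statements(void)
{
    size_t covered = 0;
    for (size_t i = 0; i < N_STMTS; i++)
        if (hits[i] > 0)
            covered++;
    return covered;
}
```

Running `instrumented` with every combination of succeeding and failing steps still covers only 2 of the 4 instrumented statements, which is exactly the kind of gap a coverage report would expose.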

Note that trying to achieve full statement coverage, especially for security or safety critical code, is not a strange thing to require. For aviation software, statement coverage is required for criticality “level C”:

Level C:

Software whose anomalous behavior, as shown by the system safety assessment process, would cause or contribute to a failure of system function resulting in a major failure condition for the aircraft.

This is one of the weaker categories: For the catastrophic ‘level A’, even stronger test coverage criteria are required. Thus, achieving substantial line coverage is neither impossible nor uncommon.

The Return-Error-Code Idiom

Finally, looking at the full sources of the affected file, one of the key things to notice is the use of the return code idiom to mimic exception handling.

Since C has no built-in exception handling support, a common idiom is to insist that every function returns an error code. Subsequently, every caller must check this returned code, and include an explicit jump to the error handling functionality if the result is not OK.

This is exactly what is done in the present code: The err variable is set and checked for every function call, each failed check is followed by (hopefully exactly one) goto fail, and err is finally returned.

Almost 10 years ago, Magiel Bruntink, Tom Tourwe, and I conducted a detailed empirical analysis of this idiom in a large code base of an embedded system.

One of the key findings of our paper is that the code we analyzed had a defect density of 2.1 deviations from the return code idiom per 1000 lines of code. Thus, in spite of very strict guidelines at the organization involved, we found many examples not just of unchecked calls, but also of incorrectly propagated return codes and incorrectly handled error conditions.
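To make two of these deviation categories concrete, here is a hypothetical sketch (the function names are invented for illustration and do not come from the analyzed code base): one correct use of the idiom, one unchecked call, and one error code that is detected but then overwritten before it is propagated.

```c
typedef int Status;                    /* 0 means OK */

/* Hypothetical steps: the first always reports error 3, cleanup always succeeds. */
static Status risky_step(void) { return 3; }
static Status cleanup(void)    { return 0; }

/* Correct: the error is checked and propagated unchanged. */
Status correct_use(void)
{
    Status err;
    if ((err = risky_step()) != 0) {
        goto fail;
    }
    /* ... further calls, each checked in the same way ... */
fail:
    cleanup();
    return err;
}

/* Deviation 1: unchecked call; the failure is silently dropped. */
Status unchecked_call(void)
{
    risky_step();
    return 0;
}

/* Deviation 2: incorrect propagation; the error code is overwritten. */
Status overwritten_error(void)
{
    Status err;
    if ((err = risky_step()) != 0) {
        goto fail;
    }
fail:
    err = cleanup();                   /* clobbers the original error code */
    return err;
}
```

All three functions compile; only `correct_use()` reports the failure (it returns 3), while the other two silently return 0.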

Based on that, we concluded:

The idiom is particularly error prone, due to the fact that it is omnipresent as well as highly tangled, and requires focused and well-thought programming.

It is sad to see our results from 2005 confirmed today.

© Arie van Deursen, February 22, 2014.

EDIT February 24, 2014: Replaced the incorrect term ‘dead code detection’ with the correct ‘unreachable code detection’, and, based on the reddit discussion, weakened the claim that any computer science student can write such a detector.