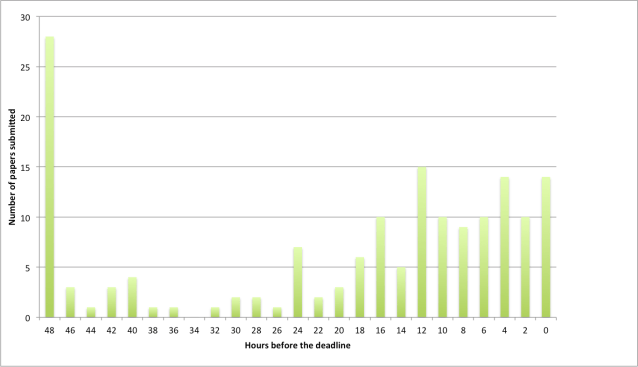

In unit testing, achieving 100% statement coverage is not realistic. But what percentage would good testers get? Which cases are typically not included? Is it important to actually measure coverage?

To answer questions like these, I took a look at the test suite of JUnit itself. This is an interesting case, since it was created by some of the best developers around the world, who care about testing. If they decide not to test a given case, what can we learn from that?

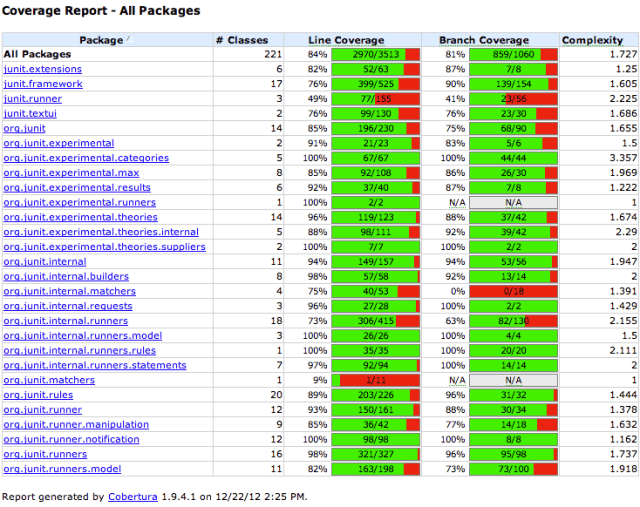

Overall Coverage at First Sight

Applying Cobertura to the 600+ test cases of JUnit leads to the results shown above (I used the Maven-enabled version of JUnit 4.11). Overall, instruction coverage is almost 85%. In total, JUnit comprises around 13,000 lines of code and 15,000 lines of test code (both counted with wc). Thus the test suite, although larger than the framework itself, leaves around 15% of the code untouched.

Covering Deprecated Code?

A first finding is that in JUnit, coverage of deprecated code tends to be lower. JUnit 4.11 contains 13 deprecated classes (more than 10% of the code base), which achieve only 65% line coverage.

JUnit includes another dozen or so deprecated methods spread over different classes. These tend to be small methods (just forwarding a call), which often are not tested.

Furthermore, JUnit 4.11 includes both the modern org.junit.* packages as well as the older junit.* packages from 3.8.x. These older packages constitute ~20% of the code base. Their coverage is 70%, whereas the newer packages have a coverage of almost 90%.

This lower coverage for deprecated code is somewhat surprising, since in a test-driven development process you would expect good coverage of code before it gets deprecated. The underlying mechanism may be that after deprecation there is no incentive to maintain the test cases: if I filed a ticket to improve the test cases for a deprecated method in JUnit, I suspect it would not get a high priority. (This calls for some repository mining research on deprecation and testing, in the spirit of our work on co-evolution of tests and code.)

Another implication is that when configuring coverage tools, it may be worth excluding deprecated code from analysis. A coverage tool that can recognize @Deprecated tags would be ideal, but I am not aware of such a tool. If excluding deprecated code is impossible, an option is to adjust coverage warning thresholds in your continuous integration tools: For projects rich in deprecated code it will be harder to maintain high coverage percentages.
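As a rough illustration of how deprecated classes could be identified for exclusion, here is a minimal sketch that relies on the fact that the @Deprecated annotation is retained at runtime and is therefore visible through reflection. The class names DeprecationFilter, OldRunner, and NewRunner are hypothetical, and feeding the resulting names into a coverage tool's exclusion settings is tool-specific and not shown.

```java
import java.util.ArrayList;
import java.util.List;

/** Sketch: find the classes that carry the @Deprecated annotation. */
final class DeprecationFilter {

    /** Hypothetical stand-in for a legacy class in your code base. */
    @Deprecated
    static class OldRunner { }

    /** Hypothetical stand-in for a current class. */
    static class NewRunner { }

    /** Returns the names of the given classes that are annotated with @Deprecated. */
    static List<String> deprecatedClassNames(List<Class<?>> classes) {
        List<String> names = new ArrayList<>();
        for (Class<?> c : classes) {
            // @Deprecated has runtime retention, so reflection can see it.
            if (c.isAnnotationPresent(Deprecated.class)) {
                names.add(c.getName());
            }
        }
        return names;
    }
}
```

Note that this only sees the annotation: code marked solely with a Javadoc @deprecated tag would go unnoticed. The same check works for individual methods via Method.isAnnotationPresent.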

Ignoring deprecated code, the JUnit coverage is 93%.

An Untested Class!

In the non-deprecated code, there was one class not covered by any test: runners.model.NoGenericTypeParametersValidator. This class validates that @Theories are not applied to generic types (which are problematic due to type erasure).

I easily found the pull request introducing the validator about a year ago. Interestingly, the pull request included a test class clearly aimed at testing the new validator. What happened?

- Tests in JUnit are executed via @Suites. The new test class, however, was not added to any suite, and hence not executed.

- Once added to the proper suite, it turned out the new tests failed: the new validation code was never actually invoked.
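A forgotten suite entry like this one could be caught mechanically. The sketch below assumes you have already collected two sets of class names: all test classes in the project, and all classes listed in some suite (for example by reading the @SuiteClasses annotations). How those sets are collected is not shown, and the class SuiteMembershipCheck is hypothetical.

```java
import java.util.Set;
import java.util.TreeSet;

/** Sketch: report test classes that no suite refers to. */
final class SuiteMembershipCheck {

    /** Returns, in sorted order, the test classes that are not listed in any suite. */
    static Set<String> orphans(Set<String> allTestClasses, Set<String> classesListedInSuites) {
        Set<String> result = new TreeSet<>(allTestClasses);
        // Whatever remains after removing the suite members is never executed.
        result.removeAll(classesListedInSuites);
        return result;
    }
}
```

A build step could fail whenever the returned set is non-empty, which would have flagged the validator's test class as soon as the pull request was opened.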

I posted a comment on the (already closed) pull request. The original developer responded quickly, and provided a fix for the code and the tests within a day.

Note that finding this issue through coverage thresholds in a continuous integration server may not be so easy. The pull request in question causes a 1% increase in code size, and a 1% decrease in coverage. Alerts based on thresholds need to be sensitive to small changes like these. (Moreover, the current Ant-based CloudBees continuous integration server for JUnit does not monitor coverage at all.)

What I’d really want is continuous integration alerts based on coverage deltas for the files modified in the pull request only. I am, however, not aware of tools supporting this at the moment.
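To make the idea concrete, here is a minimal sketch of such a check. It assumes per-file line coverage (as a fraction between 0 and 1) is available for the build before and after the pull request, together with the set of files the pull request touches. The class ChangedFileCoverage, its method, and the minimum value are all hypothetical, and extracting the inputs from a coverage report or version control system is not shown.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

/** Sketch: flag changed files whose coverage dropped or is below a minimum. */
final class ChangedFileCoverage {

    static List<String> filesToFlag(Map<String, Double> before,
                                    Map<String, Double> after,
                                    Set<String> changedFiles,
                                    double minimum) {
        List<String> flagged = new ArrayList<>();
        // Iterate in sorted order so the report is stable.
        for (String file : new TreeSet<>(changedFiles)) {
            double now = after.getOrDefault(file, 0.0);
            Double old = before.get(file); // null for files added by the pull request
            boolean dropped = old != null && now < old;
            boolean tooLow = now < minimum;
            if (dropped || tooLow) {
                flagged.add(file);
            }
        }
        return flagged;
    }
}
```

Because only the touched files are inspected, a new class with no coverage at all is flagged even when it shifts the overall percentage by a point or less.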

The Usual Suspects: 6%.

To understand the final 5-6% of uncovered code, I had a look at the remaining classes. For those, there was not a single method with more than 2 or 3 uncovered lines. For this uncovered code, various typical categories can be distinguished.

First, there is the category too simple to test. Here is an example from org.junit.Assume, in which an assumeTrue is turned into an assumeFalse by just adding a negation operator:

    public static void assumeFalse(boolean b) {
        assumeTrue(!b);
    }

Other instances of too simple to test include basic getters, or overrides for methods such as toString.

A special case of too simple to test is the empty method. These are typically used to provide (or override) default behavior in inheritance hierarchies:

    /**
     * Override to set up your specific external resource.
     *
     * @throws if setup fails (which will disable {@code after}
     */
    protected void before() throws Throwable {
        // do nothing
    }

Another category is code that is dead by design. An example is a static only class, which need not be instantiated. It is good Java practice (adopted selectively in JUnit too) to make this explicit by declaring the constructor private:

    /**
     * Protect constructor since it is a static only class
     */
    protected Assert() {
    }

In other cases dead by design involves an assertion that certain situations will never occur. An example is Request.java:

    catch (InitializationError e) {
        throw new RuntimeException(
                "Bug in saff's brain: " +
                "Suite constructor, called as above, should always complete");
    }

This is similar to a default case in a switch statement that can never be reached.

A final category consists of bad weather behavior that is unlikely to happen. This typically manifests itself in not explicitly testing that certain exceptions are caught:

    try {
        ...
    } catch (InitializationError e) {
        return new ErrorReportingRunner(null, e);
    }

Here the catch clause is not covered by any test. Similar cases occur for example when raising an illegal argument exception if inputs do not meet simple validation criteria.
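For the simple validation case, covering the bad weather branch takes very little code. The following sketch is not taken from JUnit: RangeCheck and its methods are hypothetical, and the check is written in plain Java so that it runs without a test framework.

```java
/** Sketch: a simple validation method and a check that exercises its failure branch. */
final class RangeCheck {

    /** Hypothetical validation: accepts 0..100 and rejects everything else. */
    static int checkPercentage(int value) {
        if (value < 0 || value > 100) {
            throw new IllegalArgumentException("Percentage out of range: " + value);
        }
        return value;
    }

    /** Returns true if checkPercentage rejects the value, covering the throw statement. */
    static boolean rejects(int value) {
        try {
            checkPercentage(value);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }
}
```

In a JUnit 4 test, the same intent is usually expressed with @Test(expected = IllegalArgumentException.class) on a test method that calls checkPercentage(-1).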

EclEmma and JaCoCo

While all of the above is based on Cobertura, I started out using EclEmma/JaCoCo 0.6.0 in Eclipse for doing the coverage analysis. There were two (small) surprises.

First, merely enabling EclEmma code coverage caused the JUnit test suite to fail. The issue at hand is that in JUnit, test methods can be sorted according to different criteria. This involves reflection, and the test outcomes were influenced by additional (synthetic) methods generated by JaCoCo. The solution is to configure JaCoCo so that instrumentation of certain classes is disabled, or to make the JUnit test suite more robust against instrumentation.
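One way to make reflective code robust against instrumentation is to skip synthetic members, since the members JaCoCo adds are marked as synthetic. The sketch below shows the idea; it is not the fix applied in JUnit, and the classes DeclaredMethodNames and Sample are hypothetical.

```java
import java.lang.reflect.Method;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Sketch: list the methods written in the source, ignoring generated ones. */
final class DeclaredMethodNames {

    /** Hypothetical class under inspection. */
    static class Sample {
        void second() { }
        void first() { }
    }

    /** Returns the sorted names of the non-synthetic methods declared by the given class. */
    static List<String> sortedSourceMethodNames(Class<?> type) {
        List<String> names = new ArrayList<>();
        for (Method m : type.getDeclaredMethods()) {
            // Compilers and instrumentation tools flag generated methods as synthetic.
            if (!m.isSynthetic()) {
                names.add(m.getName());
            }
        }
        Collections.sort(names);
        return names;
    }
}
```

With this filter, the result for Sample stays the same whether or not a coverage agent has added methods to the class at load time.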

Second, JaCoCo does not report coverage of code raising exceptions. In contrast to Cobertura, JaCoCo does on-the-fly instrumentation using an agent attached to the Java class loader. Instructions in blocks that are not completed due to an exception are not reported as being covered.

As a consequence, JaCoCo is not suitable for exception-intensive code. JUnit, however, is rich in exceptions, for example in the various Assert methods. Consequently, the code coverage for JUnit reported by JaCoCo is around 3% lower than by Cobertura.

Lessons Learned

Applying line coverage to one of the best tested projects in the world, here is what we learned:

- Carefully analyzing coverage of code affected by your pull request is more useful than monitoring overall coverage trends against thresholds.

- It may be OK to lower your testing standards for deprecated code, but do not let this affect the rest of the code. If you use coverage thresholds on a continuous integration server, consider setting them differently for deprecated code.

- There is no reason to have methods with more than 2-3 untested lines of code.

- The usual suspects (simple code, dead code, bad weather behavior, …) correspond to around 5% of uncovered code.

In summary, should you monitor line coverage? Not all development teams do, and even in the JUnit project it does not seem to be a standard practice. However, if you want to be as good as the JUnit developers, there is no reason why your line coverage should be below 95%. And monitoring coverage is a simple first step to verify just that.
